This article was paid for by a contributing third party.

‘Intelligent orchestration’ – how insurers are connecting AI, workflows and controls

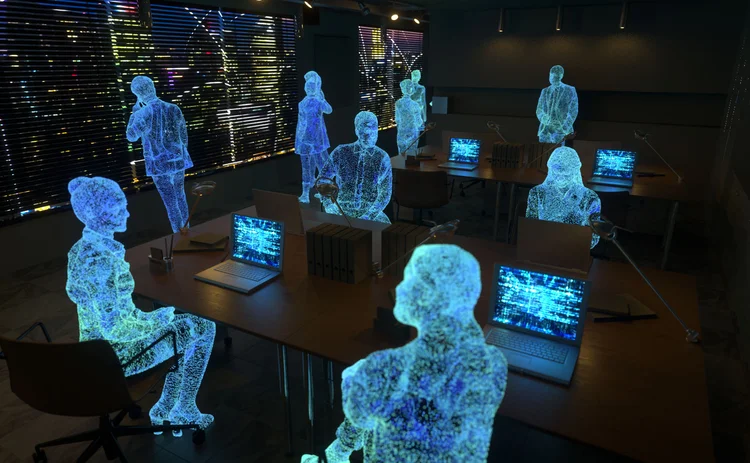

In the latest Insurance Post webinar, technology leaders discussed the challenges of AI implementation and how insurers must connect workflows, data and controls to turn hype into value.

Artificial intelligence has moved quickly from experimentation to everyday use inside insurance technology teams – embedded into code generation, testing pipelines, deployment workflows and security reviews. But faster code is only part of the story. For engineering and technology leaders, the harder challenge is making AI work reliably across the entire software delivery lifecycle, not just at the point of generation.

During Insurance Post’s recent webinar ‘Connecting the dots: maximising the potential of AI implementation in insurance’, sponsored by GitLab, a panel of insurance CTOs and engineering leaders explored why AI so often accelerates one part of the pipeline while creating new bottlenecks, risks and governance gaps elsewhere.

The consensus was clear: success in AI-enabled software delivery is less about choosing the right tool, and more about orchestration, platform discipline, and embedding controls directly into the workflows where engineers work.

Joined-up thinking, guardrails permitting

Pankaj Kane, chief engineer at Admiral Group, noted the growing number of AI use cases in insurance and highlighted some of the challenges that can arise across the software development lifecycle, including in code generation.

“There is a real benefit in generating code faster,” he said. “But you need to think end-to-end otherwise you’re just moving bottlenecks from one place to another.” Teams may celebrate faster development, only to find the real pressure has simply moved downstream into security reviews, QA testing, governance sign-off, and rework.

Kane also stressed the need for clear guardrails to minimise AI misbehaviours. AI can generate what he described as “code bloat”, producing large blocks of functionality that are technically correct but unnecessary.

According to Martin Young, CTO at Convex Insurance, adoption needs to be encouraged, even when tools are available. Teams may revert to familiar habits unless organisations are deliberate about shifting working practices. “We’ve found that engineers tend to revert to previous ways of working,” he said.

As AI becomes more deeply embedded, Kane also warned insurers to keep an eye on the growing security risks created by connecting models into toolchains and workflows at scale.

“As LLMs grow and you add more connections, the attack surface increases,” he said.

Keeping tabs on data

For Tim Gough, CTO at Simplyhealth, the rapid proliferation of models is creating governance challenges, particularly around data residency and sensitive information. Insurers are not just managing technology adoption, but the increasing complexity of where sensitive data flows, how it is stored, and which suppliers are involved in processing it.

“Engineers need education around data residency,” he said. “Models are proliferating, and we need to ensure we’re maintaining data in the right place – and that we’re not leaking customer data into the public domain.”

He also highlighted the operational effort required to maintain AI-enabled tools once they are live. In many cases, building a chatbot or assistant is relatively straightforward. The harder part is ensuring it remains consistent, explainable and safe over time, especially when prompts and workflows are created informally and not shared.

“It’s easy to build these tools and get them into the field,” he said. “But prompts are bespoke and not transferable. There’s no common language, so longer-term maintenance [across multiple projects] could be challenging.”

George Kichukov, field CTO for financial services at GitLab, argued that this is where insurers risk repeating mistakes made with earlier waves of technology adoption: scaling quickly before putting discipline around testing, security and governance.

“We can generate more code,” he said. “But are we generating more technical debt? Are our testing and security practices keeping up?”

Fragmentation a fast route to complexity

As insurers scale adoption, the panel warned disconnected AI initiatives can quickly create fragmentation. Kane said organisations risk ending up with multiple LLMs, inconsistent practices and ‘prompt sprawl’. The result is duplicated effort, less sharing of best practice, and a widening gap between what different teams understand about risk.

Kichukov described the problem as an “integration tax”, with each disconnected tool adding friction and reducing context. In an AI-enabled pipeline, context is everything: without it, outputs are weaker, governance becomes harder, and mistakes are easier to miss.

“If code generation happens in one tool, testing in another, deployment in a third – no system sees the full picture.”

That fragmentation, he argued, is what prevents AI from delivering consistent results at scale.

“If you can bring it together and optimise context, it can make a huge difference,” he explained. “It can move success rates from 10% to 90%.”

Culture matters – but so does structure

Asked how insurers can create an open culture where teams are not resistant to AI, the panel agreed that firms must allow for different levels of interest and engagement. Striking the right balance is particularly important in insurance, where delivery teams must innovate while maintaining regulatory discipline.

Young recommended a deliberately low-risk approach, encouraging teams to experiment in manageable, controlled steps.

“We try to keep it small and simple,” he said.

Kane said Admiral’s journey has followed a similar path. Early experimentation started from the bottom up, with teams exploring tools in a safe way. But as adoption grows, he argued organisations need a more deliberate ‘top-down’ approach if AI is to deliver measurable improvements in cycle time.

Kichukov added that one of the simplest ways to accelerate cultural change is to make success visible. Internal storytelling matters, because people are more likely to engage when they can see tangible benefits rather than abstract strategy.

“Celebrate the wins publicly and share ideas,” he said. “People see what others are doing and they see the personal benefit.”

Boards need realism – probably

As AI becomes a strategic priority, board-level interest is growing. But how far should senior executives be involved in the process without putting undue pressure on results? Young said the best approach is transparency: engaging boards early on rather than presenting AI projects as a fait accompli.

“Bring them on the journey,” he said. “However small. Be honest about the trials and tribulations.”

He also argued insurers must reframe expectations, because AI introduces probability into environments that have traditionally relied on deterministic answers. For boards, that requires a mindset shift: AI outputs may be accurate, but they may not always be 100% certain.

“We are moving from a deterministic world to a probabilistic one,” he said. “You might give an answer with 90% probability – it’s not always black and white.”

Kane added that board expectations are often shaped by inflated market narratives, with analyst predictions and consultancy reports driving unrealistic assumptions about how quickly transformation will happen.

“Analysts and consultancies have made big pronouncements,” he said. “Some aggressive early adopters are quietly rolling back.”

Kichukov suggested boards respond best when AI adoption is framed in measurable milestones rather than hype.

“Start with metrics,” he said. “Current state, future state, phased milestones. Don’t buy into the hype – it won’t change overnight.”

Making the value case

A key strand of the discussion was the challenge of quantifying AI’s value. Gough said that for Simplyhealth, the benefits are more easily seen in areas such as customer service and operational processes than in software engineering. Those repeatable processes provide a clearer business case, because insurers can see faster response times, reduced admin burden and more consistent outcomes.

He described the impact as a step-change compared to earlier automation approaches.

“It’s like RPA on steroids,” he said. “You can build a clear benefits case for that.”

By contrast, engineering productivity can be harder to define. But Kichukov argued the first step is being honest about existing measurement gaps.

“The first question is: how do you measure productivity today?” he said. “Then you add AI on top.” Measures such as cycle time, deployment frequency, change failure rate, and time spent on manual tasks can be used to track time to value, he said.
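As a rough illustration of what such a baseline might look like, the Python sketch below computes three of the measures Kichukov mentions from a list of deployment records. The `Deployment` record shape, the `delivery_metrics` function and the use of a median for cycle time are assumptions made for illustration; the panel did not describe a specific implementation.

```python
from dataclasses import dataclass
from datetime import datetime, timedelta
from statistics import median


@dataclass(frozen=True)
class Deployment:
    """One production deployment (hypothetical record shape)."""
    first_commit_at: datetime  # when work on the change began
    deployed_at: datetime      # when the change reached production
    caused_failure: bool       # whether it needed a rollback or hotfix


def delivery_metrics(deployments: list[Deployment], period_days: int) -> dict[str, float]:
    """Summarise cycle time, deployment frequency and change failure rate."""
    if not deployments or period_days <= 0:
        raise ValueError("need at least one deployment and a positive period")
    # Cycle time per change, expressed in hours.
    cycle_hours = [
        (d.deployed_at - d.first_commit_at) / timedelta(hours=1)
        for d in deployments
    ]
    failures = sum(d.caused_failure for d in deployments)
    return {
        "median_cycle_time_hours": median(cycle_hours),
        "deployments_per_week": len(deployments) * 7 / period_days,
        "change_failure_rate": failures / len(deployments),
    }
```

Running the same function over periods before and after an AI tool is introduced would give a like-for-like comparison, provided the underlying records are captured consistently.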

Kichukov explained how one client, a major insurer in EMEA, saw clear workflow improvements from AI in two areas: enhanced testing with AI assistants, and root cause analysis of failed pipelines and deployments.

Likewise, Kane’s team has invested in software intelligence tooling to build a baseline, enabling the organisation to see where AI is reducing cycle time and where it is increasing risk or rework.

“We’ve seen a definite uptick in productivity,” he said. “Backflow reaction times have reduced drastically because we use an LLM to code-review before a request reaches an engineer.”

Compliance can’t be a bolt-on

Security and compliance featured heavily in the discussion, with Kichukov stressing insurers cannot rely on policy documents and after-the-fact controls when AI is embedded into delivery pipelines. Instead, controls need to be embedded into workflows, with automation and policy-as-code enabling consistent enforcement.

“Policies might sit in a 10-page document that’s unread or forgotten,” he said. “Auditability is a big [issue]… do we have evidence to show auditors and regulators?

“To make sure it happens comes down to platform integration when AI capabilities are embedded within a holistic platform – security and compliance control applied consistently across human and AI-generated processes.”
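To illustrate what policy-as-code can mean in practice, the Python sketch below turns a written rule into an automated check that also produces a record for auditors. The `ChangeRequest` fields, the three rules and the function names are all hypothetical; this is not GitLab's implementation or any panellist's.

```python
import json
from dataclasses import asdict, dataclass


@dataclass(frozen=True)
class ChangeRequest:
    """Hypothetical summary of a change awaiting deployment."""
    ai_generated: bool          # recorded for audit; rules apply either way
    human_approvals: int
    security_scan_passed: bool
    tests_passed: bool


def evaluate_policy(change: ChangeRequest) -> list[str]:
    """Return policy violations; an empty list means the change may proceed."""
    violations = []
    if not change.tests_passed:
        violations.append("tests must pass")
    if not change.security_scan_passed:
        violations.append("security scan must pass")
    # Illustrative rule: the same human review applies to human and AI changes.
    if change.human_approvals < 1:
        violations.append("at least one human approval required")
    return violations


def audit_record(change: ChangeRequest, violations: list[str]) -> str:
    """Serialise the decision so it can be stored as evidence."""
    return json.dumps(
        {
            "change": asdict(change),
            "violations": violations,
            "allowed": not violations,
        },
        sort_keys=True,
    )
```

Because the rule lives in code that runs on every change, it is enforced the same way each time, and the stored record is the kind of evidence the 10-page document cannot provide.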

With explainability a major focus for regulators, Gough highlighted the importance of continuous dialogue between teams to ensure a clear decision path around policy decisions such as renewals criteria. He also warned that supply chains are becoming more opaque as suppliers layer multiple models into a single interaction.

“You don’t know how many LLMs are involved,” he said. “Some suppliers use two or three models in a single interaction.”

Conducting the orchestra

The panel argued orchestration and platform thinking will become essential as insurers move beyond isolated pilots. Kane said the goal is to reduce cognitive load for engineers and avoid each team reinventing how they connect AI into toolchains.

“I don’t want engineers figuring out how to connect to an LLM,” he said. “Or how to join it to Jira or toolchains. It needs to be instrumented in a consistent way.”

Kichukov said orchestration is what enables insurers to keep both humans and AI working “in the flow”, while ensuring security, testing and governance remain visible across the value stream.

“When you look at value stream visibility from plan to production, that’s when the real value happens,” he said.

Changing team dynamics

Asked how AI is changing teams, Young said insurers are already seeing demand grow for architecture-level roles, as organisations look to reduce fragmentation and design scalable operating models.

“True data scientists are also in demand,” he added.

Pushing back against the idea AI will allow businesses to operate without engineers, Gough warned that maintenance and oversight remain significant challenges.

“We’re not at the stage where the business can write prompts off the hook,” he said.

Kane predicted teams may shrink over time, but said striking the right balance between team size and output will be challenging.

“The expectation is squads will become smaller delivering the same output,” he said. “But how you make it scalable with guardrails is still to be decided.”

For Kichukov, thinking about how team dynamics will evolve in the future is “scary but exciting”.

“System thinking, business domain knowledge, SDLC fundamentals and architecture-level thinking” will be among the core requirements, he said. “…All examples of a mature skill set that will endure”.

Sponsored content

Copyright Infopro Digital Limited. All rights reserved.

As outlined in our terms and conditions, https://www.infopro-digital.com/terms-and-conditions/subscriptions/ (point 2.4), printing is limited to a single copy.

If you would like to purchase additional rights please email info@postonline.co.uk
